Rodrick Wallace

New York State Psychiatric Institute, New York, NY, USA

Summary

The cross-sectional decontextualization afflicting contemporary neuroscience—attributing to “the brain” what is the province of the whole individual—is mirrored by an evolutionary decontextualization exceptionalizing consciousness. The living state is characterized by cognitive processes at all scales and levels of organization. Many can be associated with dual information sources that “speak” a “language” of behavior-in-context. Shifting, tunable, global broadcasts analogous to consciousness, albeit far slower—wound healing, tumor control, immune function, gene expression, etc.—have emerged through repeated evolutionary exaptation of the crosstalk and noise inherent to all information transmission. These broadcasts recruit “unconscious” cognitive modules into shifting arrays as needed to meet the threats and opportunities that confront all organisms across multiple frames of reference. The development is straightforward, based on the powerful necessary conditions imposed by the asymptotic limit theorems of communication theory, in the same sense that the Central Limit Theorem constrains sums of stochastic variates. Recognition of information as a form of free energy instantiated by physical processes that consume free energy permits analogs to phase transition and nonequilibrium thermodynamic arguments, leading to “dynamic regression models” useful for data analysis.

Nothing in biology makes sense except in light of evolution.

– T. Dobzhansky

1.1 Introduction

The neuroscientist Max Bennett and the philosopher Peter Hacker characterize contemporary neuroscience, and particularly consciousness studies, as fatally contaminated by a decontextualization that attributes to “the brain” functions and dynamics inherent to the whole individual, what they call the “mereological fallacy” (Bennett and Hacker 2003). It is possible, with some formal overhead, to evade this version of the fallacy by erecting a more comprehensive scientific structure that embeds the shifting, tunable global neural broadcasts of individual animal consciousness (e.g., Baars 1988, 2005) within a nested hierarchy of cross-sectional physiological and sociocultural phenomena (e.g., Wallace 2005, 2007; Wallace and Fullilove 2008; Wallace and Wallace 2013).

Unfortunately, another fatal manifestation of the fallacy is unaddressed. There remains the longitudinal decontextualization of consciousness from the body of evolutionary theory that is the foundation of contemporary biology. Was consciousness, then, sprung Athena-like and full-armed from the brow of an adaptationist Zeus some 500 million years ago? A rather different kind of “just so” story is needed that recognizes the necessary conditions imposed by the asymptotic limit theorems of communication theory on all biological and ecological processes involving the production and transmission of information.

Recall that information sources produce, and channels transmit, information, and that transmission and production are constrained by the Shannon Coding, the Shannon–McMillan Source Coding, and the Rate Distortion Theorems (e.g., Khinchin 1957; Ash 1990; Cover and Thomas 2006; Shannon 1959). These are as confining as the Central Limit Theorem is for sums of independent stochastic variates, as the Martingale Theorem is for repeated games of chance, and as the Ergodic Theorem is in converging time averages to cross-sectional means for a broad class of probabilistic phenomena.

Indeed, parallel to the neuroscience and philosophy debate is what Adams (2003) calls “the informational turn in philosophy”—explicit application of communication theory formalism and concepts to “purposive behavior, learning, pattern recognition, and…the naturalization of mind and meaning.” One of the first comprehensive attempts was that of Dretske (1981, 1988, 1992, 1993, 1994), whose work Adams describes:

It is not uncommon to think that information is a commodity generated by things with minds. Let’s say that a naturalized account puts matters the other way around, viz. it says that minds are things that come into being by purely natural causal means of exploiting the information in their environments. This is the approach of Dretske as he tried consciously to unite the cognitive sciences around the well-understood mathematical theory of communication…

Dretske himself (1994) writes:

Communication theory can be interpreted as telling one something important about the conditions that are needed for the transmission of information as ordinarily understood, about what it takes for the transmission of semantic information. This has tempted people…to exploit [information theory] in semantic and cognitive studies, and thus in the philosophy of mind.

…Unless there is a statistically reliable channel of communication between [a source and a receiver]…no signal can carry semantic information…[thus] the channel over which the [semantic] signal arrives [must satisfy] the appropriate statistical constraints of communication theory.

It is fruitful to redirect attention from the informational content or meaning of individual symbols—the province of semantics which so concerned Dretske—back to the statistical properties of long, internally structured strings of signals emitted by an information source that is “dual” in a certain formal manner to a cognitive process. Application of a variety of tools adapted from statistical physics produces a dynamically tunable punctuation or phase transition coupling interacting cognitive modules in a highly natural way.

As Dretske so clearly saw, this approach allows scientific inference on the necessary conditions for cognition, greatly illuminating formal models of consciousness and other processes within and across organisms without raising the eighteenth-century ghosts of noisy, distorted mechanical clocks inherent to dynamic systems theory. The technique permits extension far beyond what is possible from statistical mechanics treatments of neural networks. In essence, the method broadly recapitulates the General Linear Model (GLM) for independent or simple serially correlated observations, but on the far more complex behaviors of an interacting assembly of information sources, using the Shannon Coding, Shannon–McMillan Source Coding, and Rate Distortion Theorems rather than the Central Limit Theorem.

Later chapters will explore the implications of the Data Rate Theorem that links control and information theories.

Wallace (2010) has examined the central role of information transmission constraints in evolutionary process, but, going in the opposite direction, this analysis will show how the inherent “flaws” of noise and crosstalk provide hooks for exaptations, in the sense of Gould (2002), that enabled evolution of a broad spectrum of tunable physiological global broadcasts, of which consciousness is merely a late, if remarkably rapid, example.

The next sections provide case histories of phenomena that recruit underlying sets of cognitive processes—“unconscious” modules—into a larger whole, including the Baars model of consciousness itself. The various examples are, however, much in the spirit of Maturana and Varela (1980, 1992) who long ago understood the central role that cognition must play in biological phenomena.

1.2 Some Cognitive Global Broadcasts

Immune System

Atlan and Cohen (1998) have proposed an information-theoretic—and implicitly global broadcast—cognitive model of immune function and process, a paradigm incorporating cognitive pattern recognition-and-response behaviors that are certainly analogous to, but much slower than, those of the later-evolved central nervous system.

From the Atlan/Cohen perspective, the meaning of an antigen can be reduced to the type of response the antigen generates. That is, the meaning of an antigen is functionally defined by the response of the immune system. The meaning of an antigen to the system is discernible in the type of immune response produced, not merely whether or not the antigen is perceived by the receptor repertoire. Because the meaning is defined by the type of response there is indeed a response repertoire and not only a receptor repertoire.

To account for immune interpretation, Cohen (1992, 2000) has reformulated the cognitive paradigm for the immune system. The immune system can respond to a given antigen in various ways; it has “options.” Thus the particular response observed is the outcome of internal processes of weighing and integrating information about the antigen.

In contrast to Burnet’s view of the immune response as a simple reflex, it is seen to exercise cognition by the interpolation of a level of information processing between the antigen stimulus and the immune response. A cognitive immune system organizes the information borne by the antigen stimulus within a given context and creates a format suitable for internal processing; the antigen and its context are transcribed internally into the “chemical language” of the immune system.

The cognitive paradigm suggests a language metaphor to describe immune communication by a string of chemical signals. This metaphor is apt because the human and immune languages can be seen to manifest several similarities such as syntax and abstraction. Syntax, for example, enhances both linguistic and immune meaning.

Although individual words and even letters can have their own meanings, an unconnected subject or an unconnected predicate will tend to mean less than does the sentence generated by their connection.

The immune system creates a “language” by linking two ontogenetically different classes of molecules in a syntactical fashion. One class comprises the T and B cell receptors for antigens. These molecules are not inherited, but are somatically generated in each individual. The other class of molecules, responsible for internal information processing, is encoded in the individual’s germline.

Meaning, the chosen type of immune response, is the outcome of the concrete connection between the antigen subject and the germline predicate signals.

The transcription of the antigens into processed peptides embedded in a context of germline ancillary signals constitutes the functional language of the immune system. Despite the logic of clonal selection, the immune system does not respond to antigens as they are, but to abstractions of antigens-in-context, and does so in a dynamic manner across many tissues, in effect as global broadcasts.

Tumor Control

Nunney (1999) has explored cancer occurrence as a function of animal size, suggesting that in larger animals, whose lifespan grows as about the 4/10 power of their cell count, prevention of cancer in rapidly proliferating tissues becomes more difficult in proportion to size. Cancer control requires the development of additional mechanisms and systems to address tumorigenesis as body size increases—a synergistic effect of cell number and organism longevity. Nunney concludes that this pattern may represent a real barrier to the evolution of large, long-lived animals and predicts that those that do evolve have recruited additional controls over those of smaller animals to prevent cancer.

In particular, different tissues may have evolved markedly different tumor control strategies. All of these, however, are likely to be energetically expensive, permeated with different complex signaling strategies, and subject to a multiplicity of reactions to signals, including those related to psychosocial stress. Forlenza and Baum (2000) explore the effects of stress on the full spectrum of tumor control, ranging from DNA damage and control, to apoptosis, immune surveillance, and mutation rate. R. Wallace et al. (2003) argue that this elaborate tumor control strategy, in large animals, must be at least as cognitive as the immune system itself, one of its principal components: some comparison must be made with an internal picture of a healthy cell, and a choice made as to response, i.e., none, attempt DNA repair, trigger programmed cell death, engage in full-blown immune attack. This is, from the Atlan/Cohen perspective, the essence of cognition, and clearly involves the recruitment of a comprehensive set of cognitive subprocesses into a larger, highly tunable, dynamic structure.

Wound Healing

Following closely Midwood et al. (2004), mammalian tissue repair is a series of overlapping events that begins immediately after wounding, as in Fig. 1.1 (Haggstrom 2012).

Fig. 1.1

Stages of wound healing on a logarithmic timescale, after Haggstrom (2012). Multiple subprocesses are recruited to the wound site in sequence, a global broadcast of cognitive phenomena slower, but no less sophisticated, than animal consciousness

Platelet aggregation forms a hemostatic plug and blood coagulation forms the provisional matrix. This dense cross-linked network of fibrin and fibronectin from blood acts to prevent excessive blood loss. Platelets release growth factors and adhesive proteins that stimulate the inflammatory response, entraining immune function, and inducing cell migration into the wound using the provisional matrix as a substrate. Wound cleaning is done by neutrophils, solubilizing debris, and monocytes that differentiate into macrophages and phagocytose debris. The macrophages release growth factors and cytokines that activate subsequent events. For cutaneous wounds, keratinocytes migrate across the area to reestablish the epithelial barrier. Fibroblasts then enter the wound to replace the provisional matrix with granulation tissue composed of fibronectin and collagen. As endothelial cells revascularize the damaged area, fibroblasts differentiate into myofibroblasts and contract the matrix to bring the margins of the wound together. The resident cells then undergo apoptosis, leaving collagen-rich scar tissue that is slowly remodeled in the following months. Wound healing, then, provides an ancient example of a global broadcast that recruits a set of cognitive processes, in the sense of Atlan and Cohen (1998). The mechanism, which may vary across taxa, is inherently tunable, addressing the signal of “excessive distortion” represented by a wound.

Gene Expression

A cognitive paradigm for gene expression has emerged, a model in which contextual factors determine the behavior of what must be characterized as a “reactive system,” not at all a deterministic—or even simple stochastic—mechanical process (Cohen 2006; Cohen and Harel 2007; Wallace and Wallace 2008, 2009, 2010).

O’Nuallain (2008) puts gene expression directly in the realm of complex linguistic behavior, for which context imposes meaning. He claims that the analogy between gene expression and language production is useful both as a fruitful research paradigm and also, given the relative lack of success of natural language processing by computer, as a cautionary tale for molecular biology. A relatively simple model of cognitive process as an information source permits use of Dretske’s (1994) insight that any cognitive phenomenon must be constrained by the limit theorems of information theory, in the same sense that sums of stochastic variables are constrained by the Central Limit Theorem. This perspective permits a new formal approach to gene expression and its dysfunctions, in particular suggesting new and powerful statistical tools for data analysis that could contribute to exploring both ontogeny and its pathologies. Wallace and Wallace (2009, 2010) apply the perspective, respectively, to infectious and chronic disease.

This approach is consistent with the broad context of epigenetics and epigenetic epidemiology. Jablonka and Lamb (1995, 1998), for example, argue that information can be transmitted from one generation to the next in ways other than through the base sequence of DNA. It can be transmitted through cultural and behavioral means in higher animals, and by epigenetic means in cell lineages. All of these transmission systems allow the inheritance of environmentally induced variation. Such Epigenetic Inheritance Systems are the memory systems that enable somatic cells of different phenotypes but identical genotypes to transmit their phenotypes to their descendants, even when the stimuli that originally induced these phenotypes are no longer present.

After much research and debate, this epigenetic perspective has received much empirical confirmation (e.g., Backdahl et al. 2009; Turner 2000; Jaenisch and Bird 2003; Jablonka 2004).

Foley et al. (2009) argue that epimutation is estimated to be 100 times more frequent than genetic mutation and may occur randomly or in response to the environment. Periods of rapid cell division and epigenetic remodeling are likely to be most sensitive to stochastic or environmentally mediated epimutation. Disruption of epigenetic profile is a feature of most cancers and is speculated to play a role in the etiology of other complex diseases including asthma, allergy, obesity, type 2 diabetes, coronary heart disease, autism spectrum and bipolar disorders, and schizophrenia.

Scherrer and Jost (2007a,b) explicitly invoke information theory in their extension of the definition of the gene to include the local epigenetic machinery, a construct they term the “genon.” Their central point is that coding information is not simply contained in the coded sequence, but is, in their terms, provided by the genon that accompanies it on the expression pathway and controls in which peptide it will end up. In their view the information that counts is not about the identity of a nucleotide or an amino acid derived from it, but about the relative frequency of the transcription and generation of a particular type of coding sequence that then contributes to the determination of the types and numbers of functional products derived from the DNA coding region under consideration.

The genon, as Scherrer and Jost describe it, is precisely a localized form of global broadcast linking cognitive regulatory modules to direct gene expression in producing the great variety of tissues, organs, and their linkages that comprise a living entity.

The proper formal tools for understanding phenomena that “provide” information—that are information sources—are the Rate Distortion Theorem and its zero error limit, the Shannon–McMillan Theorem.

Sociocultural Cognition

Humans are particularly noted for a hypersociality that inevitably enmeshes us all in group decisions and collective cognitive behavior within a social network, tinged by an embedding shared culture. For humans, culture is truly fundamental. Durham (1991), Richerson and Boyd (2006), Jablonka and Lamb (1995), and many others argue that genes and culture are two distinct but interacting systems of inheritance within human populations. Information of both kinds has influence, actual or potential, over behaviors, creating a real and unambiguous symmetry between genes and phenotypes on the one hand, and culture and phenotypes on the other. Genes and culture are best represented as two parallel lines or tracks of hereditary influence on phenotypes.

Much of hominid evolution can be characterized as an interweaving of genetic and cultural systems. Genes came to encode for increasing hypersociality, learning, and language skills. The most successful populations displayed increasingly complex structures that better aided in buffering the local environment (e.g., Bonner 1980).

Successful human populations seem to have a core of tool usage, sophisticated language, oral tradition, mythology, music, and decision-making skills focused on relatively small family/extended family social network groupings. More complex social structures are built on the periphery of this basic object (e.g., Richerson and Boyd 2006). The human species’ very identity may rest on its unique evolved capacities for social mediation and cultural transmission. These are particularly expressed through the cognitive decision making of small groups facing changing patterns of threat and opportunity, processes in which we are all embedded and all participate.

The emergent cognitive behavior of organizations has, in fact, long been explored under the label “distributed cognition.” As Hollan et al. (2000) describe,

The theory of distributed cognition, like any cognitive theory, seeks to understand the organization of cognitive systems. Unlike traditional theories, however, it extends the reach of what is considered cognitive beyond the individual to encompass interactions between people and with resources and materials in the environment. It is important from the outset to understand that distributed cognition refers to a perspective on all of cognition, rather than a particular kind of cognition…Distributed cognition looks for cognitive processes, wherever they may occur, on the basis of the functional relationships of elements that participate together in the process. A process is not cognitive simply because it happens in a brain, nor is a process noncognitive simply because it happens in the interactions between many brains…In distributed cognition one expects to find a system that can dynamically configure itself to bring subsystems into coordination to accomplish various functions.

Wallace and Fullilove (2008) apply several of the formal models explored below to institutions and other social structures, and Wallace (2006, 2008, 2009, 2010, 2017) uses them to analyze canonical and idiosyncratic failure modes of massively parallel real-time computing systems.

1.3 Animal Consciousness

Sergent and Dehaene (2004) describe the context surrounding consciousness studies as follows:

[A growing body of empirical work shows] large all-or-none changes in neural activity when a stimulus fails to be [consciously] reported as compared to when it is reported…[A] qualitative difference between unconscious and conscious processing is generally expected by theories that view recurrent interactions between distant brain areas as a necessary condition for conscious perception…One of these theories [that of Bernard Baars] has proposed that consciousness is associated with the interconnection of multiple areas processing a stimulus by a [dynamic] “neuronal workspace” within which recurrent connections allow long-distance communication and auto-amplification of the activation. Neuronal network simulations…suggest the existence of a fluctuating dynamic threshold. If the primary activation evoked by a stimulus exceeds this threshold, reverberation takes place and stimulus information gains access, through the workspace, to a broad range of [other brain] areas allowing, among other processes, verbal report, voluntary manipulation, voluntary action and long-term memorization. Below this threshold, however, stimulus information remains unavailable to these processes. Thus the global neuronal workspace theory predicts an all-or-nothing transition between conscious and unconscious perception…More generally, many non-linear dynamical systems with self-amplification are characterized by the presence of discontinuous transitions in internal state…

Thus Bernard Baars’ global workspace model of animal consciousness sees the phenomenon as a dynamic array of unconscious cognitive modules that unite to become a global broadcast having a tunable perception threshold not unlike a theater spotlight, but whose range of attention is constrained by embedding contexts (Baars 1988, 2005). Baars and Franklin (2003) describe these matters as follows:

1. 1.

2. 2.

3. 3.

4. 4.

5. 5.

6. 6.

7. 7.

The basic mechanism emerges from a relatively simple application of the asymptotic limit theorems of information theory, once a broad range of unconscious cognitive processes is recognized as inherently characterized by information sources, that is, generalized languages (Wallace 2000, 2005, 2007). This permits mapping physiological unconscious cognitive modules onto an abstract network of interacting information sources, allowing a simplified mathematical attack that, in the presence of sufficient linkage (crosstalk), permits rapid, shifting, global broadcasts in response to sufficiently large impinging signals. The topology of that broadcast is tunable, depending on the spectrum of distortion measures and other limits imposed on the system of interest.

While the mathematical description of consciousness is itself relatively simple, the evolutionary trajectories leading to its emergence seem otherwise. Here we argue that this is not the case, and that physical restrictions on the availability of metabolic free energy provide sufficient conditions for the emergence, not only of consciousness, but also of a spectrum of analogous “global” broadcast phenomena acting across a variety of biological scales of space, time, and levels of organization.

The argument is, in a sense, an inversion of Gould and Lewontin’s (1979) famous essay “The Spandrels of San Marco and the Panglossian Paradigm: A Critique of the Adaptationist Programme.” Spandrels are the triangular sectors of the intersecting arches that support a cathedral roof, simple byproducts of the need for arches, and their occurrence is in no way fundamental to the construction of a cathedral. Crosstalk between “low level” cognitive biological modules is a similar inessential byproduct that evolutionary process has exapted to construct the dynamic global broadcasts of consciousness and a spectrum of roughly analogous physiological phenomena: evolution built many new arches from a single spandrel.

A formal overview, much like Onsager’s nonequilibrium thermodynamics, leads to dynamic “regression models” that should be useful for data analysis.

1.4 Cognition as “Language”

Atlan and Cohen (1998) argue above, in the context of the immune system, that cognitive function involves comparison of a perceived signal with an internal, learned or inherited picture of the world, and then choice of one response from a much larger repertoire of possible responses. That is, cognitive pattern recognition-and-response proceeds by an algorithmic combination of an incoming external sensory signal with an internal ongoing activity—incorporating the internalized picture of the world—and triggering an appropriate action based on a decision that the pattern of sensory activity requires a response.

Incoming sensory input is thus mixed in an unspecified but systematic manner with a pattern of internal ongoing activity to create a path of combined signals $x = (a_0, a_1, \ldots, a_n, \ldots)$. Each $a_k$ thus represents some functional composition of the internal and the external. An application of this perspective to a standard neural network is given in Wallace (2005, p. 34).

This path is fed into a similarly unspecified decision function, $h$, generating an output $h(x)$ that is an element of one of two disjoint sets $B_0$ and $B_1$ of possible system responses. Let

$$B_0 \equiv \{b_0, \ldots, b_k\}, \qquad B_1 \equiv \{b_{k+1}, \ldots, b_m\}.$$

Assume a graded response, supposing that if

$$h(x) \in B_0,$$

the pattern is not recognized, and if

$$h(x) \in B_1,$$

the pattern is recognized, and some action $b_j$, $k + 1 \leq j \leq m$, takes place.

Formal interest focuses on paths $x$ triggering pattern recognition-and-response: given a fixed initial state $a_0$, examine all possible subsequent paths $x$ beginning with $a_0$ and leading to the event $h(x) \in B_1$. Thus $h(a_0, \ldots, a_j) \in B_0$ for all $0 \leq j < m$, but $h(a_0, \ldots, a_m) \in B_1$.

For each positive integer $n$, let $N(n)$ be the number of high probability paths of length $n$ that begin with some particular $a_0$ and lead to the condition $h(x) \in B_1$. Call such paths “meaningful,” assuming that $N(n)$ will be considerably less than the number of all possible paths of length $n$ leading from $a_0$ to the condition $h(x) \in B_1$.

Note that identification of the “alphabet” of the states $a_j$, $B_k$ may depend on the proper system “coarse graining” in the sense of symbolic dynamics (Beck and Schlogl 1995).

The combining algorithm, the form of the function $h$, and the details of grammar and syntax are all unspecified in this model. The assumption permitting inference on necessary conditions constrained by the asymptotic limit theorems of information theory is that the finite limit

![$$\displaystyle{ H \equiv \lim _{n\rightarrow \infty }\frac{\log [N(n)]} {n} }$$](computational-psychiatry.files/image006.png)

(1.1)

both exists and is independent of the path x.
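As a toy illustration of the limit in Eq. (1.1), the sketch below counts "meaningful" paths for a deliberately simple, hypothetical grammar: symbols are drawn from {0, 1}, the origin is fixed at 0, and a path counts as meaningful only if it never contains two consecutive 1s. This rule is an assumption standing in for the unspecified decision function, not part of the model itself. For this grammar the counts follow the Fibonacci numbers, so the estimate approaches the natural logarithm of the golden ratio, about 0.481.

```python
import math


def count_meaningful_paths(n: int) -> int:
    """Count paths a_1..a_n over {0, 1} that follow a fixed origin a_0 = 0 and
    contain no two consecutive 1s (a hypothetical stand-in grammar)."""
    if n < 1:
        raise ValueError("path length must be a positive integer")
    end0, end1 = 1, 1  # length-1 paths ending in 0 and in 1
    for _ in range(n - 1):
        # A 0 may follow anything; a 1 may only follow a 0.
        end0, end1 = end0 + end1, end0
    return end0 + end1


def source_uncertainty_estimate(n: int) -> float:
    """Finite-n estimate log[N(n)] / n of the limit H (natural logarithm)."""
    return math.log(count_meaningful_paths(n)) / n


if __name__ == "__main__":
    for n in (5, 50, 500):
        print(n, source_uncertainty_estimate(n))
```

The estimate settles down as n grows, which is the behavior the existence of the finite limit requires; a grammar whose path counts grew irregularly would not satisfy the assumption.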

Call such a pattern recognition-and-response cognitive process ergodic. Not all cognitive processes are likely to be ergodic, implying that $H$, if it indeed exists at all, is path dependent, although extension to nearly ergodic processes, in a certain sense, seems possible (e.g., Wallace 2005, pp. 31–32).

Invoking the spirit of the Shannon–McMillan Theorem, it is possible to define an adiabatically, piecewise stationary, ergodic information source $\mathbf{X}$ associated with stochastic variates $X_j$ having joint and conditional probabilities $P(a_0, \ldots, a_n)$ and $P(a_n \mid a_0, \ldots, a_{n-1})$ such that appropriate joint and conditional Shannon uncertainties satisfy the classic relations (Cover and Thomas 2006)

![$$\displaystyle{ H[\mathbf{X}] =\lim _{n\rightarrow \infty }\frac{\log [N(n)]} {n} =\lim _{n\rightarrow \infty }H(X_{n}\vert X_{0},\ldots,X_{n-1}) =\lim _{n\rightarrow \infty }\frac{H(X_{0},\ldots,X_{n})} {n}. }$$](computational-psychiatry.files/image007.png)

(1.2)

This information source is defined as dual to the underlying ergodic cognitive process, in the sense of Wallace (2000, 2005, 2007).
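The second and third expressions in Eq. (1.2) can be checked numerically for a simple case. The sketch below assumes a hypothetical two-state stationary Markov source (the transition values are chosen only for illustration) and compares the conditional uncertainty with the per-symbol joint uncertainty obtained by brute-force enumeration. It does not attempt to count high probability paths, so the first equality in Eq. (1.2) is not demonstrated here.

```python
import itertools
import math

# Hypothetical two-state stationary Markov source (illustrative values only).
P = [[0.9, 0.1],
     [0.4, 0.6]]
PI = [0.8, 0.2]  # stationary distribution, satisfying PI = PI * P


def entropy(probs):
    """Shannon uncertainty -sum p log p, natural logarithm."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def conditional_rate():
    """H(X_n | X_{n-1}) under the stationary law; for a Markov source this
    equals H(X_n | X_0, ..., X_{n-1})."""
    return sum(PI[i] * entropy(P[i]) for i in range(2))


def joint_entropy(n):
    """H(X_0, ..., X_n), summing over all 2**(n+1) paths explicitly."""
    total = 0.0
    for path in itertools.product((0, 1), repeat=n + 1):
        prob = PI[path[0]]
        for a, b in zip(path, path[1:]):
            prob *= P[a][b]
        total -= prob * math.log(prob)
    return total


if __name__ == "__main__":
    print("conditional rate:", conditional_rate())
    for n in (2, 6, 12):
        print(n, joint_entropy(n) / n)
```

For this source the joint uncertainty equals the uncertainty of the initial state plus n times the conditional rate, so the per-symbol value approaches the conditional rate as n increases.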

“Adiabatic” means that, when the information source is parameterized according to some appropriate scheme, within continuous “pieces,” changes in parameter values take place slowly enough so that the information source remains as close to stationary and ergodic as needed to make the fundamental limit theorems work. “Stationary” means that probabilities do not change in time, and “ergodic” (roughly) that cross-sectional means converge to long-time averages. Between “pieces” one invokes various kinds of phase change formalism, for example, renormalization theory in cases where a mean field approximation holds (Wallace 2005), or variants of random network theory where a mean number approximation is applied.

Extension of the theory to “nonergodic” information sources is possible, given some considerable mathematical overhead, e.g., Wallace (2005, pp. 31–32).

Recall that the Shannon uncertainties $H(\ldots)$ are cross-sectional law-of-large-numbers sums of the form $-\sum_k P_k \log[P_k]$, where the $P_k$ constitute a probability distribution. See Cover and Thomas (2006), Ash (1990), or Khinchin (1957) for the standard details.
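As a small worked example, take a hypothetical three-outcome distribution $P = (1/2, 1/4, 1/4)$ and, as an assumption made only for this illustration, logarithms to base 2:

$$H = -\sum_k P_k \log_2[P_k] = \tfrac{1}{2}(1) + \tfrac{1}{4}(2) + \tfrac{1}{4}(2) = 1.5 \ \text{bits}.$$

With natural logarithms the same distribution gives $1.5 \ln 2 \approx 1.04$; the choice of base changes only the units.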

A formal equivalence class algebra can be constructed by choosing different origin points, $a_0$, and defining the equivalence of two states, $a_m$, $a_n$, by the existence of high probability meaningful paths connecting them to the same origin point. Disjoint partition by equivalence class, analogous to orbit equivalence classes for dynamical systems, defines the vertices of a network of cognitive dual languages. Each vertex then represents a different information source dual to a cognitive process. This is not a representation of a neural network as such, or of some circuit in silicon. It is, rather, an abstract set of “languages” dual to the set of cognitive biological processes.
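The partition into equivalence classes can be sketched computationally. The example below assumes a hypothetical input, `reachable_from`, that lists for each origin the states joined to it by high probability meaningful paths; how such paths are identified is left unspecified, as in the text. A union-find structure merges states that share an origin, and the origin itself is placed in its own class, which is a further assumption of this sketch.

```python
def partition_states(reachable_from):
    """Group states into disjoint classes.

    reachable_from: dict mapping an origin to the states connected to it by
    meaningful paths (hypothetical input). Two states fall in the same class
    when a chain of shared origins links them.
    """
    parent = {}

    def find(state):
        parent.setdefault(state, state)
        while parent[state] != state:
            parent[state] = parent[parent[state]]  # path compression
            state = parent[state]
        return state

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    for origin, states in reachable_from.items():
        find(origin)
        for state in states:
            union(origin, state)

    classes = {}
    for state in list(parent):
        classes.setdefault(find(state), set()).add(state)
    return sorted(sorted(members) for members in classes.values())


if __name__ == "__main__":
    example = {"a0": ["a1", "a2"], "c0": ["c1"], "d0": ["a2", "d1"]}
    print(partition_states(example))
```

Each resulting class corresponds to one vertex of the abstract network, that is, to one dual information source.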

This structure generates a groupoid, leading to complicated algebraic properties summarized in the Mathematical Appendix.

1.5 No Free Lunch

Given a set of biological cognitive modules that become linked to solve a problem (e.g., riding a bicycle in heavy traffic, followed by localized wound healing), the famous “no free lunch” theorem of Wolpert and Macready (1995, 1997) illuminates the next step in the argument. As English (1996) states the matter,

…Wolpert and Macready…have established that there exists no generally superior [computational] function optimizer. There is no “free lunch” in the sense that an optimizer “pays” for superior performance on some functions with inferior performance on others…gains and losses balance precisely, and all optimizers have identical average performance…[That is] an optimizer has to “pay” for its superiority on one subset of functions with inferiority on the complementary subset…

Another way of stating this conundrum is to say that a computed solution is simply the product of the information processing of a problem, and, by a very famous argument, information can never be gained simply by processing. Thus a problem X is transmitted as a message by an information processing channel, Y, a computing device, and recoded as an answer. By the “tuning theorem” argument of the Mathematical Appendix, there will be a channel coding of Y which, when properly tuned, is itself most efficiently “transmitted,” in a purely formal sense, by the problem—the “message” X. In general, then, the most efficient coding of the transmission channel, that is, the best algorithm turning a problem into a solution, will necessarily be highly problem-specific. Thus there can be no best algorithm for all sets of problems, although there will likely be an optimal algorithm for any given set.
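The averaging claim in the quoted passage can be checked by brute force on a toy problem. The sketch below is illustrative only: the four-point domain, the three-valued objective, the “best value seen after m evaluations” score, and the three search strategies are all assumptions introduced here, not constructions from Wolpert and Macready.

```python
from itertools import product

DOMAIN = (0, 1, 2, 3)   # hypothetical search space
VALUES = (0, 1, 2)      # hypothetical objective values

def fixed_order(order):
    """Non-adaptive search: evaluate points in a fixed order."""
    def search(f, m):
        return max(f[x] for x in order[:m])
    return search

def adaptive(f, m):
    """Adaptive search: the next point depends on the last value observed."""
    visited = [0]
    while len(visited) < m:
        remaining = [x for x in DOMAIN if x not in visited]
        last = f[visited[-1]]
        visited.append(remaining[0] if last >= 1 else remaining[-1])
    return max(f[x] for x in visited)

def average_best(search, m):
    """Mean best-value-found after m evaluations, over ALL functions DOMAIN -> VALUES."""
    funcs = [dict(zip(DOMAIN, vals)) for vals in product(VALUES, repeat=len(DOMAIN))]
    return sum(search(f, m) for f in funcs) / len(funcs)

strategies = [fixed_order((0, 1, 2, 3)), fixed_order((3, 1, 0, 2)), adaptive]
averages = {m: [average_best(s, m) for s in strategies] for m in range(1, 5)}
```

For every evaluation budget m the three averages coincide: a strategy can only outperform on some functions by underperforming on others.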

Indeed, something much like this result is well known, using another description. Shannon (1959) wrote

There is a curious and provocative duality between the properties of [an information] source with a distortion measure and those of a channel. This duality is enhanced if we consider channels in which there is a cost associated with the different letters…Solving this problem corresponds, in a sense, to finding a source that is right for the channel and the desired cost…In a somewhat dual way, evaluating the rate distortion function for a source…corresponds to finding a channel that is just right for the source and allowed distortion level.

From the no free lunch argument, it is clear that different challenges facing an entity must be met by different arrangements of cooperating “low level” cognitive modules. It is possible to make a very abstract picture of this phenomenon, not based on anatomy, but rather on the network of linkages between the information sources dual to the physiological and learned unconscious cognitive modules (UCM). That is, the remapped network of lower level cognitive modules is reexpressed in terms of the information sources dual to the UCM. Given two distinct problem classes (e.g., riding a bicycle vs. wound healing), there must be two different “wirings” of the information sources dual to the available physiological UCM, as in Fig. 1.2, with the network graph edges measured by the amount of information crosstalk between sets of nodes representing the dual information sources. A more formal treatment of such coupling can be given in terms of network information theory (Cover and Thomas 2006), particularly incorporating the effects of embedding contexts, implied by the “external” information source Z, that is, signals from the environment.

Fig. 1.2

By the no free lunch theorem, two markedly different problems facing an organism will be optimally solved by two different linkages of available lower level cognitive modules—characterized now by their dual information sources Xj —into different temporary networks of working structures, here represented by crosstalk among those sources rather than by the physiological UCM themselves. The embedding information source Z represents the influence of external signals whose effects can be at least formally accounted for by network information theory

The possible expansion of a closely linked set of information sources dual to the UCM into a global broadcast—the occurrence of a kind of “spandrel”—depends, in this model, on the underlying network topology of the dual information sources and on the strength of the couplings between the individual components of that network.

For random networks the results are well known (Erdos and Renyi 1960). Following the review by Spenser (2010) closely (see, e.g., Boccaletti et al. 2006, for more detail), assume there are n network nodes and e edges connecting the nodes, distributed with uniform probability, with no nonrandom clustering. Let G[n, e] be the state when there are e edges. The central question is the typical behavior of G[n, e] as e changes from 0 to n(n − 1)∕2. The latter expression is the number of possible pair contacts in a population having n individuals. Another way to say this is to let G(n, p) be the probability space over graphs on n vertices where each pair is adjacent with independent probability p. The behaviors of G[n, e] and G(n, p) where e = pn(n − 1)∕2 are asymptotically the same.
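The G(n, p) construction can be sampled directly. The following is a minimal simulation sketch, assuming the parameterization p = c∕n and using a union-find structure to track components. The function name, the network size, and the seed are illustrative choices.

```python
import random

def largest_component_fraction(n, c, seed=0):
    """Sample G(n, p) with p = c/n and return the largest component's share of nodes."""
    rng = random.Random(seed)
    p = c / n
    parent = list(range(n))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    # Each of the n(n-1)/2 pairs is linked independently with probability p.
    for i in range(n):
        for j in range(i + 1, n):
            if rng.random() < p:
                ri, rj = find(i), find(j)
                if ri != rj:
                    parent[ri] = rj

    sizes = {}
    for i in range(n):
        root = find(i)
        sizes[root] = sizes.get(root, 0) + 1
    return max(sizes.values()) / n

below = largest_component_fraction(1000, 0.5)  # sparse regime
above = largest_component_fraction(1000, 2.0)  # dense regime
```

In the sparse case the largest cluster holds only a small share of the nodes, while in the dense case a single component absorbs most of the network.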

For the simple random case, parameterize as p = c∕n. The graph with n∕2 edges then corresponds to c = 1. The essential finding is that the behavior of the random network has three sections:

1. 1.

2. 2.

3. 3.

Then

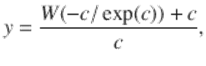

(1.4)

where W is the Lambert W function.

The solid line in Fig. 1.3 shows y as a function of c, representing the fraction of network nodes that are incorporated into the interlinked giant component, a de facto global broadcast for interacting UCM. To the left of c = 1 there is no giant component, and large-scale cognitive process is not possible.
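The random-graph curve can be reproduced numerically. The sketch below assumes the standard random-graph relation 1 − y = exp(−cy) for the giant-component fraction, which is an assumption stated here; a closed form in the Lambert W function is equivalent. The relation is solved by fixed-point iteration so that only the standard library is needed.

```python
import math

def giant_fraction(c, tol=1e-12, max_iter=10_000):
    """Positive solution y of 1 - y = exp(-c*y); zero at or below the threshold c = 1."""
    if c <= 1.0:
        return 0.0
    y = 1.0  # start above the root; the iteration decreases monotonically toward it
    for _ in range(max_iter):
        y_next = 1.0 - math.exp(-c * y)
        if abs(y_next - y) < tol:
            return y_next
        y = y_next
    return y

# Sample the curve on either side of the threshold.
curve = [(c, giant_fraction(c)) for c in (0.5, 1.0, 1.5, 2.0, 3.0)]
```

The computed fraction is zero up to c = 1 and rises steeply thereafter, which is the threshold behavior described for the solid line.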

Fig. 1.3

Fraction of network nodes in the giant component as a function of the crosstalk coupling parameter c. The solid line represents a random graph, the dotted line a star-of-stars-of-stars network in which all nodes are interconnected, showing that the dynamics of giant component emergence are highly dependent on an underlying network topology that, for UCM, may itself be tunable. For the random graph, a strength of c < 1 precludes emergence of a larger-scale “global” broadcast

The dotted line, however, represents the fraction of nodes in the giant component for a highly nonrandom network, a star-of-stars-of-stars (SoS) in which every node is directly or indirectly connected with every other one. For such a topology there is no threshold, only a single giant component, showing that the emergence of a giant component in a network of information sources dual to the UCM is dependent on a network topology that may itself be tunable. A generalization of this result follows from an index theorem argument below.

1.6 Multiple Broadcasts, Punctuated Detection

The random network development above is predicated on there being a variable average number of fixed-strength linkages between components. Clearly, the mutual information measure of crosstalk is not inherently fixed, but can continuously vary in magnitude. This suggests a parameterized renormalization. In essence, the modular network structure linked by mutual information interactions has a topology depending on the degree of interaction of interest.

Define an interaction parameter ω, a real positive number, and look at geometric structures defined in terms of linkages set to zero if mutual information is less than, and “renormalized” to unity if greater than, ω. Any given ω will define a regime of giant components of network elements linked by mutual information greater than or equal to it.
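The thresholding step can be made concrete as follows. This is a sketch under stated assumptions: the symmetric matrix of pairwise mutual information values is invented for illustration, and the function name is hypothetical. Links at or above ω are kept, the rest are dropped, and the connected components of what remains are returned.

```python
def broadcast_components(mi, omega):
    """Link modules i, j when mi[i][j] >= omega; return components as sorted lists."""
    n = len(mi)
    seen, components = set(), []
    for start in range(n):
        if start in seen:
            continue
        stack, comp = [start], []
        seen.add(start)
        while stack:  # depth-first search over surviving links
            i = stack.pop()
            comp.append(i)
            for j in range(n):
                if j not in seen and mi[i][j] >= omega:
                    seen.add(j)
                    stack.append(j)
        components.append(sorted(comp))
    return components

# Hypothetical crosstalk (mutual information) between four modules.
mi = [
    [0.0, 0.9, 0.2, 0.1],
    [0.9, 0.0, 0.6, 0.1],
    [0.2, 0.6, 0.0, 0.3],
    [0.1, 0.1, 0.3, 0.0],
]
loose = broadcast_components(mi, 0.25)
medium = broadcast_components(mi, 0.5)
strict = broadcast_components(mi, 0.95)
```

Raising ω locks weakly coupled modules out of the largest component, which is the detection-limit behavior the renormalization argument relies on.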

Now invert the argument: A given topology for the giant component will, in turn, define some critical value, $\omega_C$, so that network elements interacting by mutual information less than that value will be unable to participate, i.e., will be locked out and not be “consciously” perceived. Thus ω is a tunable, syntactically dependent, detection limit that depends critically on the instantaneous topology of the giant component of linked cognitive modules defining the global broadcast. That topology is, fundamentally, the basic tunable syntactic filter across the underlying modular structure, and variation in ω is only one aspect of a much more general topological shift. Further analysis can be given in terms of a topological rate distortion manifold (Glazebrook and Wallace 2009a,b).

There is considerable empirical evidence from fMRI brain imaging and many other experiments to show that individual animal consciousness, restricted by necessity of a time constant near 100 ms, involves a single, shifting and tunable, global broadcast, a matter leading to the phenomenon of inattentional blindness. Multiple cognitive submodules within systems not constrained to the 100 ms time range, for example, institutions (individuals, departments, formal and informal workgroups), by contrast, can do more than one thing, and indeed, are usually required to multitask. Clearly, then, multiple global broadcasts, indexed by a set $\Omega = \{\omega_1, \ldots, \omega_n\}$, lessen the probability of inattentional blindness, if there is time to support them, but do not eliminate it, and introduce critical failure modes related to the degradation of information transmitted between global broadcasts.

Thus Ω represents a set of crosstalk information measures between cognitive submodules, each associated with its own tunable giant component having its own special topology.

Again, although animal consciousness, with its 100 ms time constant, seems restricted to a single tunable global broadcast, it is clear that slower physiological global broadcast analogs would permit individual subsystems, or localized sets of such subsystems, to engage in more than one global broadcast at a time, to multitask, in the same sense that workgroups within an institution will usually be given more than one task at a time. Thus the immune system can be expected to simultaneously engage in wound healing, attack on invading microorganisms, neuroimmuno dialog, and routine tissue maintenance tasks (Cohen 2000).

1.7 Metabolic Constraints

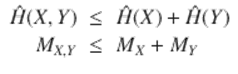

The information sources dual to the linked unconscious cognitive modules represented in Fig. 1.2 are not independent, but are correlated, so that a joint information source can be defined having the properties

$$H(X, Y) = H(X) + H(Y \vert X) \leq H(X) + H(Y)$$

(1.5)

This is the information chain rule (e.g., Cover and Thomas 2006), and has profound implications. Feynman (2000) describes in great detail how information and free energy have an inherent duality. Feynman, in fact, defines information precisely as the free energy needed to erase a message. The argument is surprisingly direct (e.g., Bennett 1988), and for very simple systems it is easy to design a small (idealized) machine that turns the information within a message directly into usable work, that is, free energy. Information is a form of free energy and the construction and transmission of information within living things (the physical instantiation of information) consumes metabolic free energy, with inevitable and considerable losses via the second law of thermodynamics.
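The inequality behind the chain rule is easy to verify numerically for correlated sources. The sketch below uses an invented two-by-two joint distribution for a pair (X, Y); all names and numbers are illustrative.

```python
import math

def H(probs):
    """Shannon uncertainty in bits of a list of probabilities."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Hypothetical joint distribution p(x, y): rows index x, columns index y.
joint = [[0.4, 0.1],
         [0.1, 0.4]]

px = [sum(row) for row in joint]        # marginal of X
py = [sum(col) for col in zip(*joint)]  # marginal of Y

h_xy = H([p for row in joint for p in row])
h_x, h_y = H(px), H(py)
crosstalk = h_x + h_y - h_xy            # mutual information I(X; Y)
```

Because the two sources are correlated, the joint uncertainty falls short of the sum of the individual uncertainties, and the shortfall is exactly the mutual information.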

Suppose an intensity of metabolic free energy is associated with each information source H(X, Y), H(X), H(Y), e.g., rates $M_{X,Y}$, $M_X$, $M_Y$.

Although information is a form of free energy, in the sense of Feynman (2000) and Bennett (1988), there is a massive entropic loss in its physical expression, so that the probability distribution of a source uncertainty H can be written as

![$$\displaystyle{ P[H] = \frac{\exp [-H/\kappa M]} {\int \exp [-H/\kappa M]dH} }$$](computational-psychiatry.files/image012.png)

(1.6)

assuming κ is very small.

To first order,

![$$\displaystyle{ \hat{H}=\int HP[H]dH \approx \kappa M }$$](computational-psychiatry.files/image013.png)

(1.7)

and, using Eq. (1.5),

$$M_{X,Y} \leq M_{X} + M_{Y}$$

(1.8)

Thus, as a consequence of the information chain rule, allowing crosstalk consumes a lower rate of metabolic free energy than isolating information sources. A more detailed calculation is given in Sect. 11.3 of the Mathematical Appendix. That is, in general, it takes more metabolic free energy to isolate a set of cognitive phenomena than it does to allow them to engage in crosstalk, a signal interaction that, under typical electrical engineering circumstances, grows as the inverse square of the separation between circuits. This is a well-known problem in electrical engineering, where adequate mitigation can consume considerable attention and other resources.
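The step from Eq. (1.6) to the metabolic comparison can be written out under two simplifying assumptions that are introduced here rather than taken from the text: the source uncertainty ranges over $[0, \infty)$, and the same constant κ applies to every source, with each H read as its mean value.

```latex
\begin{aligned}
\hat{H} &= \frac{\int_{0}^{\infty} H \exp[-H/\kappa M]\, dH}
               {\int_{0}^{\infty} \exp[-H/\kappa M]\, dH}
         = \frac{(\kappa M)^{2}}{\kappa M} = \kappa M, \\
H(X, Y) \leq H(X) + H(Y)
  &\;\Longrightarrow\; \kappa M_{X,Y} \leq \kappa M_{X} + \kappa M_{Y}
  \;\Longrightarrow\; M_{X,Y} \leq M_{X} + M_{Y}.
\end{aligned}
```

Under these assumptions the mean uncertainty is linear in the metabolic rate, so the subadditivity of joint uncertainty carries over directly to the rates.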

The global broadcast mechanisms of consciousness and its slower physiological generalizations make an arch of this spandrel, using the lowered metabolic free energy requirement of crosstalk interaction between low level cognitive modules as the springboard for launching (sometimes) rapid, tunable, more highly correlated, multiple global broadcasts that link those modules to solve problems.

1.8 Environmental Signals

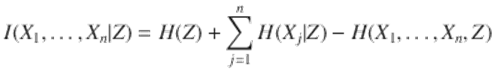

Lower level cognitive modules operate within larger, highly structured, environmental signals and other constraints whose regularities may also have a recognizable grammar and syntax, represented in Fig. 1.2 by an embedding information source Z. Under such a circumstance the splitting criterion for three jointly typical sequences is given by the classic relation of network information theory (Cover and Thomas 2006, Theorem 15.2.3)

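$$I(X_1, X_2 \vert Z) = H(Z) + H(X_1 \vert Z) + H(X_2 \vert Z) - H(X_1, X_2, Z)$$

(1.9)

that generalizes as

$$I(X_1, \ldots, X_n \vert Z) = H(Z) + \sum_{j=1}^{n} H(X_j \vert Z) - H(X_1, \ldots, X_n, Z)$$

(1.10)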

More complicated multivariate typical sequences are treated much the same (e.g., El Gamal and Kim 2010, pp. 2–26). Given a basic set of interacting information sources $(X_1, \ldots, X_k)$ that one partitions into two ordered sets $X(\mathcal{J})$ and $X(\mathcal{J}')$, then the splitting criterion becomes $H[X(\mathcal{J} \vert \mathcal{J}')]$. Extension to a greater number of ordered sets is straightforward.

Then the joint splitting criterion—I, H above—however it may be expressed as a composite of the underlying information sources and their interactions, satisfies a relation like the first expression in Eq. (1.2), where N(n) is the number of high probability jointly typical paths of length n, and the theory carries through, now incorporating the effects of external signals as the information source Z.
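For two sources embedded in a context Z, the conditional mutual information can be computed from a joint distribution through the standard identity I(X1; X2 | Z) = H(Z) + H(X1|Z) + H(X2|Z) − H(X1, X2, Z). The sketch below is illustrative: the helper names and the two toy joint distributions are assumptions made here.

```python
import math
from collections import defaultdict
from itertools import product

def entropy(dist):
    """Shannon uncertainty in bits of a dict mapping outcomes to probabilities."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)

def marginal(joint, keep):
    """Marginalize a joint over (x1, x2, z) tuples onto the coordinate positions in keep."""
    out = defaultdict(float)
    for outcome, p in joint.items():
        out[tuple(outcome[i] for i in keep)] += p
    return out

def splitting_criterion(joint):
    """I(X1; X2 | Z) = H(Z) + H(X1|Z) + H(X2|Z) - H(X1, X2, Z)."""
    h_z = entropy(marginal(joint, (2,)))
    h_x1_given_z = entropy(marginal(joint, (0, 2))) - h_z
    h_x2_given_z = entropy(marginal(joint, (1, 2))) - h_z
    return h_z + h_x1_given_z + h_x2_given_z - entropy(joint)

# Two hypothetical cases: fully independent bits, and X2 an exact copy of X1.
independent = {(a, b, z): 1 / 8 for a, b, z in product((0, 1), repeat=3)}
copied = {(a, a, z): 1 / 4 for a, z in product((0, 1), repeat=2)}

no_crosstalk = splitting_criterion(independent)
full_crosstalk = splitting_criterion(copied)
```

The criterion vanishes when the sources share nothing beyond the context and reaches one bit when one source duplicates the other.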

1.9 Dynamic “Regression Models”

Given the splitting criteria $I(X_1, \ldots, X_n \vert Z)$ or $H[X(\mathcal{J} \vert \mathcal{J}')]$ as above, the essential point is that these are the limit, for large n, of the expression log[N(n)]∕n, where N(n) is the number of jointly typical high probability paths of the interacting information sources of length n. Again, as Feynman (2000) argues at great length, information is simply another form of free energy, and its dynamics can be expressed using a formalism similar to Onsager’s nonequilibrium thermodynamics (de Groot and Mazur 1984). This is particularly apt in view of the enormous levels of free energy needed to physically instantiate information transmission.

First, the physical model. Let F(K) be the free energy density of a physical system, K the normalized temperature, V the volume, and Z(K, V ) the partition function defined from the Hamiltonian characterizing energy states Ei . Then

![$$\displaystyle{ Z(V,K) \equiv \sum _{i}\exp [-E_{i}(V )/K] \equiv \exp [F/K] }$$](computational-psychiatry.files/image021.png)

(1.11)

so that

![$$\displaystyle{F(K) =\lim _{V \rightarrow \infty }- K\frac{\log [Z(V,K)]} {V } \equiv \frac{\log [\hat{Z} (K,V )]} {V }.}$$](computational-psychiatry.files/image022.png)

If a nonequilibrium physical system is parameterized by a set of variables $\{K_i\}$, then the empirical Onsager equations are defined in terms of the gradient of the entropy $S \equiv F - \sum_j K_j\, dF/dK_j$ (the Legendre transform of F) as

$$dK_j/dt = \sum_i L_{i,j}\, \partial S/\partial K_i$$

(1.12)

where the $L_{i,j}$ are empirical constants. For a physical system having microreversibility, $L_{i,j} = L_{j,i}$. For an information source where, for example, “the” has a much different probability than “eht,” no such microreversibility is possible, and no “reciprocity relations” can apply.

For stochastic systems this generalizes to the set of stochastic differential equations

![$$\displaystyle\begin{array}{rcl} dK_{t}^{j}& =& \sum _{ i}[L_{j,i}(t,\ldots \partial S/\partial K^{i}\ldots )dt +\sigma _{ j,i}(t,\ldots \partial S/\partial K^{i})dB_{ t}^{i}] \\ & =& L(t,K^{1},\ldots,K^{n})dt +\sum _{ i}\sigma (t,K^{1},\ldots,K^{n})dB_{ t}^{i} {}\end{array}$$](computational-psychiatry.files/image024.png)

(1.13)

where terms have been collected and expressed in the driving parameters. The $dB_t^i$ represent different kinds of “noise” whose characteristics are usually expressed by their quadratic variation. See any standard text for definitions, examples, and details (e.g., Protter 1990).
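Equations of the form of Eq. (1.13) are usually explored numerically. The following is a minimal Euler–Maruyama sketch for a single parameter K driven by Brownian noise. The mean-reverting drift and constant volatility used in the example are hypothetical stand-ins chosen for illustration, not the functions L and σ of the text.

```python
import math
import random

def euler_maruyama(drift, sigma, k0, dt, steps, seed=0):
    """Integrate dK = drift(t, K) dt + sigma(t, K) dB with the Euler-Maruyama scheme."""
    rng = random.Random(seed)
    path = [k0]
    for n in range(steps):
        t, k = n * dt, path[-1]
        dB = rng.gauss(0.0, math.sqrt(dt))  # Brownian increment, variance dt
        path.append(k + drift(t, k) * dt + sigma(t, k) * dB)
    return path

# Hypothetical drift pulling K toward 1; first without noise, then with it.
pull = lambda t, k: -(k - 1.0)
noiseless = euler_maruyama(pull, lambda t, k: 0.0, 3.0, 0.01, 2000)
noisy = euler_maruyama(pull, lambda t, k: 0.3, 3.0, 0.01, 2000)
```

With the noise switched off the path relaxes to the fixed point of the drift; with noise it fluctuates around that point, which is the kind of quasi-stable behavior the later large deviations argument perturbs.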

For the splitting criteria $I(X_1, \ldots, X_n \vert Z)$ or $H[X(\mathcal{J} \vert \mathcal{J}')]$, the role of information as a form of free energy and the corresponding limit in log[N(n)]∕n make it possible to define entropy-analogs as

![$$\displaystyle\begin{array}{rcl} & & S \equiv I(\ldots K^{i}\ldots ) -\sum _{ j}K^{j}\partial I/\partial K^{j}, \\ & & S \equiv H[X(\mathcal{J}\vert \mathcal{J}^{{\prime}})] -\sum _{ j}K^{j}\partial H[X(\mathcal{J}\vert \mathcal{J}^{{\prime}})]/\partial K^{j}, \\ & & S \propto M_{\mathcal{J}\vert \mathcal{J}^{{\prime}}}-\sum _{j}K^{j}\partial M_{ \mathcal{J}\vert \mathcal{J}^{{\prime}}}/\partial K^{j} {}\end{array}$$](computational-psychiatry.files/image025.png)

(1.14)

where the last relation invokes the embedding metabolic free energies that instantiate the actual mechanisms by which information is transmitted.

The basic dynamic “regression equations” for the system of Figs. 1.2 and 1.3, driven by a set of external “sensory” and other, internal, signal parameters K = (K1, …, Kn ) that may be measured by the information source uncertainty of other information sources, are then precisely the set of Eq. (1.13) above.

That is, the underlying picture becomes reversed, and the actual driving metabolic free energy measures MX are now seen as indexed by the source uncertainties H[X]. The different MX become each others’ embedding environments in an analog to coevolutionary dynamics.

Several features emerge directly from invoking such a coevolutionary approach.

1. 1.

2. 2.

3. 3.

This represents a highly recursive phenomenological set of stochastic differential equations (Zhu et al. 2007), but operates in a dynamic rather than static manner. The objects of this dynamical system are equivalence classes of information sources, rather than simple “stationary states” of a dynamical or reactive chemical system. The necessary conditions of the asymptotic limit theorems of communication theory have beaten the mathematical thicket back one layer.

Third, as Champagnat et al. (2006) note, shifts between the quasi-equilibria of a coevolutionary system can be addressed by the large deviations formalism. The issue of dynamics drifting away from trajectories predicted by the canonical equation can be investigated by considering the asymptotics of the probability of “rare events” for the sample paths of the diffusion.

“Rare events” are the diffusion paths drifting far away from the direct solutions of the canonical equation. The probability of such rare events is governed by a large deviation principle: when a critical parameter (designated ε) goes to zero, the probability that the sample path of the diffusion is close to a given rare path ϕ decreases exponentially to 0 with rate ![]() , where the “rate function” ![]() can be expressed in terms of the parameters of the diffusion.

This result can be used to study long-time behavior of the diffusion process when there are multiple attractive singularities. Under proper conditions the most likely path followed by the diffusion when exiting a basin of attraction is the one minimizing the rate function ![]() over all the appropriate trajectories. The time needed to exit the basin is of the order ![]() where ![]() is a quasi-potential representing the minimum of the rate function ![]() over all possible trajectories.

An essential fact of large deviations theory is that the rate function ![]() that Champagnat et al. invoke can be expressed as a kind of formal entropy measure, that is, having the canonical form

(1.15)

for some probability distribution. This result goes under a number of names: Sanov’s Theorem, Cramer’s Theorem, the Gartner–Ellis Theorem, the Shannon–McMillan Theorem, and so forth (Dembo and Zeitouni 1998).
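The entropy-like character of a rate function can be seen in the simplest case covered by Cramér's Theorem. For sums of independent Bernoulli(p) trials, the rate at which the probability of an atypically large mean x decays is the relative entropy between Bernoulli(x) and Bernoulli(p). The sketch below compares that rate with an exact binomial tail computed in log space. The coin-flip setting is an illustrative choice made here, not an example from Champagnat et al.

```python
import math

def kl_bernoulli(x, p):
    """Relative entropy D(x || p) between Bernoulli(x) and Bernoulli(p), in nats."""
    total = 0.0
    for a, b in ((x, p), (1 - x, 1 - p)):
        if a > 0:
            total += a * math.log(a / b)
    return total

def empirical_rate(n, x, p):
    """-(1/n) log P(S_n >= n*x) for S_n ~ Binomial(n, p), with 0 < p < 1."""
    k_min = math.ceil(n * x - 1e-9)  # guard against floating-point overshoot
    log_terms = [
        math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
        + k * math.log(p) + (n - k) * math.log(1 - p)
        for k in range(k_min, n + 1)
    ]
    top = max(log_terms)  # log-sum-exp keeps tiny tail probabilities representable
    log_tail = top + math.log(sum(math.exp(t - top) for t in log_terms))
    return -log_tail / n

rate = kl_bernoulli(0.7, 0.5)
gaps = [abs(empirical_rate(n, 0.7, 0.5) - rate) for n in (100, 1000, 10000)]
```

As n grows, the exact decay rate of the tail probability approaches the relative entropy, illustrating the sense in which a rate function is a formal entropy measure.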

These arguments are very much in the direction of Eq. (1.13), now seen as subject to internally driven large deviations that can themselves be described as information sources, providing ![]() -parameters that can trigger punctuated shifts between quasi-stable modes. Thus both external signals, characterized by the information source Z, and internal “ruminations,” characterized by an information source ![]() , can provide K-parameters that serve to drive the system to different quasi-equilibrium “conscious attention states” in a highly punctuated manner, if they are of sufficient magnitude to overcome the topological renormalization ω-constraints described in Sect. 1.6.

A schematic of these ideas has become common currency in systems biology, and Fig. 1.4, adapted from Fig. 1 of Kitano (2004), provides a caricature, including several possible modes. Taking a two-dimensional parameterization, so that there are two $K_j$, different basins of attraction in parameter space show system response to perturbation: (A) Return to a periodic (or chaotic) attractor. (B) Transition to a new attractor. (C) Stochastic process or external information source ![]() influences the trajectory. (D) Return to a point attractor. (E) An unstable random walk to another basin of attraction. This should be compared with Fig. 1.2 that represents two of the different network topologies of the dual information sources behind the parameterization.

Fig. 1.4

Adapted from Fig. 1 of Kitano (2004). Using a two-dimensional schematic, different basins of attraction in parameter space show system response to perturbation. (A) Return to a periodic (or chaotic) attractor. (B) Transition to a new attractor. (C) Stochastic process or external information source ![]() influences the trajectory. (D) Return to a point attractor. (E) Unstable random walk to another basin of attraction. Compare with Fig. 1.2 which represents the different network topologies of the dual information sources that underlie the parameterization

Figure 1.4 then presents, in parameter space, several of the different “no free lunch” arrangements of unconscious cognitive modules, as in Fig. 1.2, that unite to address problems facing the organism.

More generally, however, following the topological arguments of Sect. 1.6, setting the expectation of Eq. (1.13) to zero generates an index theorem (Hazewinkel 2002), in the sense of Atiyah and Singer (1963). Such an object relates analytic results—the solutions to the equations—to an underlying set of topological structures that are eigenmodes of a complicated Ω-network geometric operator whose spectrum represents the possible multiple global broadcast states of the system. This structure and its dynamics do not really have simple mechanical or electrical system analogs.

Index theorems, in this context, instantiate relations between “conserved” quantities—here, the quasi-equilibria of basins of attraction in parameter space—and underlying topological form—here, the cognitive network conformations of Fig. 1.2. Section 1.4, however, described how that network was itself defined in terms of equivalence classes of meaningful paths that, in turn, defined groupoids, a significant generalization of the group symmetries more familiar to physicists.

The approach, then, in a sense—via the groupoid construction—generalizes the famous relation between group symmetries and conservation laws uncovered by E. Noether that has become the central foundation of modern physics (Byers 1999). Thus this work proposes a kind of Noetherian (NER-terian) statistical dynamics of the living state. The saving grace of the method is that it represents the fitting of dynamic regression-like statistical models based on the asymptotic limit theorems of information theory to data, and does not presume to be a “real” picture of the underlying systems and their time behaviors: biology is not relativity theory.

As with simple fitted regression equations, actual scientific inference is done most often by comparing the same systems under different, and different systems under the same, conditions. Statistics is not science, and one can easily imagine the necessity of “nonparametric” or “non-Noetherian” models.

1.10 Phase Transition Approaches

Basic Ideas

Given sufficient available metabolic free energy, it is possible to refine the topological “renormalization” arguments of Sect. 1.6 in terms of the joint uncertainty measure driven by changes in the coupling parameter ω. Joint dynamic trajectories are assumed constrained by crosstalk, as indexed by ω, so that the probability distribution density function of a joint information source representing linked cognitive submodules is given, in a first approximation, by the standard expression for the Gibbs distribution

![$$\displaystyle{ P[H(X_{1},\ldots,X_{n})] = \frac{\exp [-H/\kappa \omega ]} {\int \exp [-H/\kappa \omega ]dH}, }$$](computational-psychiatry.files/image034.png)

(1.16)

where κ is a scaling constant. Roughly, increasing the crosstalk measure ω permits higher “symmetries” by allowing more interaction.

The Gibbs distribution may not work well for systems whose dynamics are thermodynamically open, and it is possible to generalize the treatment, using an adiabatic approximation in which the dynamics remain “close enough” to a form in which the mathematical theory can work, adapting standard phase transition formalism for shifts between adiabatic realms. In particular, rather than using exponential terms, one might well use any functional form g(H, ω) such that the integral over H converges.

A partition function-analog can be defined as

![$$\displaystyle{ Z(\kappa \omega ) =\int \exp [-H/\kappa \omega ]dH }$$](computational-psychiatry.files/image035.png)

(1.17)

Now define a “free energy,” F, over the full set of possible dynamic trajectories as constrained by ω, as

![$$\displaystyle{ \exp [-F/\kappa \omega ] \equiv \int \exp [-H/\kappa \omega ]dH, }$$](computational-psychiatry.files/image036.png)

(1.18)

so that

$$F(\omega) = -\kappa\omega \log[Z(\kappa\omega)]$$

(1.19)

This is to be taken as a Morse Function, in the sense of the Mathematical Appendix. Other—essentially similar—Morse Functions may perhaps be defined on this system, having a more “natural” interpretation from information theory.
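The partition function and free energy analogs of Eqs. (1.17) and (1.18) can be evaluated numerically. The sketch below assumes, for illustration only, that H ranges over $[0, \infty)$, in which case the integral has the closed form Z = κω and solving Eq. (1.18) for F gives F = −κω log(κω). The truncation point and step count are arbitrary numerical choices.

```python
import math

def partition_function(kappa_omega, h_max=None, steps=20_000):
    """Trapezoid estimate of Z = integral_0^inf exp(-H / kappa_omega) dH, truncated at h_max."""
    if h_max is None:
        h_max = 50.0 * kappa_omega  # the neglected tail is of order exp(-50)
    dh = h_max / steps
    total = 0.5 * (1.0 + math.exp(-h_max / kappa_omega))
    total += sum(math.exp(-i * dh / kappa_omega) for i in range(1, steps))
    return total * dh

def free_energy(kappa_omega):
    """F = -kappa_omega * log Z, from solving exp[-F / kappa_omega] = Z for F."""
    return -kappa_omega * math.log(partition_function(kappa_omega))

z_est = partition_function(2.0)
f_est = free_energy(2.0)
```

Under the stated assumption the numerical values agree with the closed forms, and F changes smoothly with κω; punctuated behavior enters only through the symmetry arguments that follow in the text.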

Argument is now by abduction from statistical physics (Landau and Lifshitz 2007; Pettini 2007). The Morse Function F is seen as constrained by the crosstalk linkage parameter ω in a manner that allows application of Landau’s theory of punctuated phase transition in terms of groupoid, rather than group, symmetries.

Recall Landau’s perspective on phase transition (Pettini 2007). The essence of his insight was that certain physical phase transitions took place in the context of a significant symmetry change, with one phase being more symmetric than the other. A symmetry is lost in the transition, i.e., spontaneous symmetry breaking. The greatest possible set of symmetries is that of the Hamiltonian describing the energy states. Usually, states accessible at lower temperatures will lack the symmetries available at higher temperatures, so that the lower temperature state is less symmetric, and transitions can be highly punctuated.

Here, dynamic process is characterized in terms of groupoid, rather than group, symmetries, and the argument by abduction is essentially similar: Increasing crosstalk—rising ω—will allow richer interactions between the interacting information sources, and will do so in a highly punctuated manner.

Kadanoff Theory

Given F as a free energy analog, a mathematical treatment of transitions between adiabatic realms is of interest. Define a characteristic “length,” r, on the network of interacting information sources, as more fully described below. It is then possible to apply renormalization symmetries in terms of the “clumping” transformation, so that, for clumps of size R, in an external “field” of strength J (that can be set to 0 in the limit), in the usual manner (e.g., Wilson 1971),

![$$\displaystyle\begin{array}{rcl} F[\omega (R),J(R)]& =& f(R)F[\omega (1),J(1)] \\ \chi (\omega (R),J(R))& =& \frac{\chi (\omega (1),J(1))} {R} {}\end{array}$$](computational-psychiatry.files/image038.png)

(1.20)

where χ is a characteristic correlation length.

As will be described below, many “biological” renormalizations, f(R), are possible that lead to a number of quite different universality classes for phase transition.

In order to define the metric r, impose a topology on the system of interacting information sources, so that, near a particular “language” A defining some uncertainty measure H there is (in an appropriate sense) an open set U of closely similar languages ![]() , such that ![]() .

Since the information sources are “similar,” for all pairs of languages ![]() in U, it is possible to:

1. 1.

2. 2.

3. 3.

Other Versions of the Kadanoff Model

Clearly, other ways of constructing a metric seem possible, as are other ways of renormalizing. For example, Wallace (2005) uses a more direct argument in which the richness of the joint uncertainty across the network of interacting cognitive modules itself grows according to something like Eq. (1.20). While Wilson’s (1971) calculation necessarily had a volume functional dependence, $f(R) = R^{3}$, source uncertainty is likely to “top out” and not increase without limit, or at least not so rapidly, and Wallace (2005) explores the influence of different forms of f(R): $R^{\delta}$, $m\log(R) + 1$, $\exp[m(R - 1)/R]$. Surprisingly, a variety of functional forms for f(R) carries through using this technique.
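The three candidate renormalization forms can be compared side by side. The sketch below simply codes the listed expressions; the function names and the parameter values are illustrative assumptions.

```python
import math

def f_power(R, delta):
    """Power-law form R**delta; delta = 3 recovers the volume dependence."""
    return R ** delta

def f_log(R, m):
    """Logarithmic form m*log(R) + 1, growing without bound but slowly."""
    return m * math.log(R) + 1.0

def f_saturating(R, m):
    """Form exp[m(R - 1)/R], which levels off at exp(m) for large R."""
    return math.exp(m * (R - 1.0) / R)

clump_sizes = (1.0, 2.0, 10.0, 1000.0)
table = [(R, f_power(R, 3.0), f_log(R, 2.0), f_saturating(R, 2.0)) for R in clump_sizes]
```

All three forms equal one at R = 1, so each leaves the unclumped system unchanged, but only the last tops out as the clump size grows.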

1.11 The Rate Distortion Approach

Some Formalism

A different perspective regarding cognitive global broadcasts emerges through an index theorem attack based on an Onsager-like nonequilibrium treatment of the disjunction between intent and impact of cognitive subprocesses, in the context of “perturbations” that may range from incoming sensory signals to pathogens, developing tumors, and the like. This is done via the Rate Distortion Theorem, leading to a conceptual simplification of previous arguments.

Many real-time biological problems are inherently rate distortion problems, in the same sense that the retina is a tool for projection of complex visual stimuli down onto a “simpler” neural substrate, and it is possible to reformulate the underlying theory from that perspective. The implementation of a complex cognitive structure, say a sequence of control orders generated by some regulatory dual information source Y, having output $y^n = y_1, y_2, \ldots$, is “digitized” in terms of the observed behavior of the regulated system, say the sequence $b^n = b_1, b_2, \ldots$. The $b_i$ are thus what happens in real time, the actual impact of the cognitive structure on its embedding environment. Assume each $b^n$ is then deterministically retranslated back into a reproduction of the original control signal, $b^n \to \hat{y}^n = \hat{y}_1, \hat{y}_2, \ldots$.

Define a distortion measure $d(y, \hat{y})$ that compares the original to the retranslated path. See Cover and Thomas (2006) for example. Suppose that with each path $y^n$ and $b^n$-path retranslation into the y-language, denoted $\hat{y}^n$, there are associated individual, joint, and conditional probability distributions $p(y^n)$, $p(\hat{y}^n)$, $p(y^n, \hat{y}^n)$, $p(y^n \mid \hat{y}^n)$.

The average distortion is defined as

$$D \equiv \sum_{y^n} p(y^n)\, d(y^n, \hat{y}^n)$$

(1.22)

It is possible, using the distributions given above, to define the information transmitted from the incoming Y to the outgoing $\hat{Y}$ process using the Shannon source uncertainty of the strings:

$$I(Y; \hat{Y}) \equiv H(Y) - H(Y \mid \hat{Y}) = H(Y) + H(\hat{Y}) - H(Y, \hat{Y})$$

If there is no uncertainty in Y, given the retranslation $\hat{Y}$, then no information is lost, and the regulated system is perfectly under control.

In general, this will not be true.

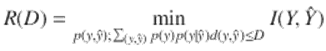

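The information Rate Distortion Function R(D) for a source Y with a distortion measure $d(y, \hat{y})$ is defined as

$$R(D) = \min_{p(\hat{y} \mid y)\,:\ \sum_{(y, \hat{y})} p(y)\, p(\hat{y} \mid y)\, d(y, \hat{y}) \leq D} I(Y; \hat{Y})$$

(1.23)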

Cover and Thomas (2006) provide more detail.

The minimization is over all conditional distributions $p(\hat{y} \mid y)$ for which the joint distribution $p(y, \hat{y}) = p(y)\, p(\hat{y} \mid y)$ satisfies the average distortion constraint (i.e., average distortion ≤ D).

The Rate Distortion Theorem states that R(D) is the minimum necessary rate of information transmission—essentially minimum channel capacity—that ensures the transmission does not exceed average distortion D (Cover and Thomas 2006). The Rate Distortion Function has been calculated for a number of systems, using Lagrange multiplier methods. Cover and Thomas (2006) show that R(D) is necessarily a decreasing convex function of D, that is, always a reverse J-shaped curve. This is a critical observation, since convexity is an exceptionally powerful mathematical condition (Ellis 1985; Rockafellar 1970).
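The decreasing, convex shape can be checked numerically on a standard textbook case that is not taken from this chapter: a Bernoulli(p) source under Hamming distortion, for which Cover and Thomas (2006) give R(D) = H(p) − H(D) for D below min(p, 1 − p) and zero otherwise. The following is a minimal sketch; the function names and the choice p = 0.3 are illustrative assumptions.

```python
import math


def binary_entropy(p: float) -> float:
    """Binary entropy H_b(p) in bits, with H_b(0) = H_b(1) = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def rate_distortion_bernoulli(p: float, d: float) -> float:
    """R(D) in bits for a Bernoulli(p) source under Hamming distortion."""
    d_max = min(p, 1.0 - p)
    if d >= d_max:
        return 0.0  # distortion budget large enough that no rate is needed
    return binary_entropy(p) - binary_entropy(d)


# Illustrative source parameter and distortion grid (assumptions).
p = 0.3
grid = [i * 0.01 for i in range(41)]
curve = [rate_distortion_bernoulli(p, d) for d in grid]

# Successive first differences are never positive (decreasing curve),
# and second differences are never negative (convex curve).
first_diffs = [b - a for a, b in zip(curve, curve[1:])]
second_diffs = [b - a for a, b in zip(first_diffs, first_diffs[1:])]
```

The curve starts at the source uncertainty H(p) when no distortion is tolerated and falls to zero once D reaches min(p, 1 − p), which is the reverse J shape described above.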

Recall, now, the classic relation between information source uncertainty and channel capacity. First,

$$H[X] \leq C$$

(1.24)

where H is the uncertainty of the source X and C the channel capacity. Recall that C is defined according to the relation

$$C \equiv \max_{P(X)} I(X; Y)$$

(1.25)

where P(X) is the probability distribution of the message chosen so as to maximize the rate of information transmission along a channel Y.
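The definition of capacity as a maximum over input distributions can be made concrete with a binary symmetric channel, a standard example that does not appear in this chapter. The sketch below searches a grid of input distributions and recovers the known result that capacity is 1 − H(ε) bits, attained at the uniform input; the function names, crossover probability, and grid size are illustrative assumptions.

```python
import math


def binary_entropy(p: float) -> float:
    """Binary entropy H_b(p) in bits, with H_b(0) = H_b(1) = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def bsc_mutual_information(q: float, eps: float) -> float:
    """I(X;Y) in bits for P(X=1) = q over a binary symmetric channel
    with crossover probability eps, using I = H(Y) - H(Y|X)."""
    p_y1 = q * (1.0 - eps) + (1.0 - q) * eps
    return binary_entropy(p_y1) - binary_entropy(eps)


def bsc_capacity(eps: float, n_grid: int = 1001):
    """Grid search over input distributions; returns (best q, max I)."""
    best_q, best_i = 0.0, -1.0
    for k in range(n_grid):
        q = k / (n_grid - 1)
        info = bsc_mutual_information(q, eps)
        if info > best_i:
            best_q, best_i = q, info
    return best_q, best_i


eps = 0.1  # illustrative crossover probability (assumption)
best_q, capacity = bsc_capacity(eps)
```

Any source whose uncertainty H exceeds the capacity found this way cannot be transmitted reliably over the channel, which is the content of the inequality between H and C.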

A Simple Model

The Rate Distortion Function places limits on information source uncertainty. Thus distortion measures can drive information system dynamics. That is, the Rate Distortion Function itself has a homological relation to free energy density.

The motivation for this approach is the observation that a Gaussian channel with noise variance σ 2 and zero mean has a Rate Distortion Function R(D) = 1∕2log[σ 2∕D] using the squared distortion measure. Defining a “Rate Distortion entropy” as the Legendre transform of the Rate Distortion Function

$$S_R \equiv R(D) - D\,\frac{dR(D)}{dD} = \frac{1}{2}\log\left[\frac{\sigma^2}{D}\right] + \frac{1}{2}$$

the simplest possible nonequilibrium Onsager equation (de Groot and Mazur 1984) is

$$\frac{dD}{dt} = -\mu\,\frac{dS_R}{dD} = \frac{\mu}{2D}$$

where t is the time and μ is a diffusion coefficient. By inspection,

$$D(t) = \sqrt{\mu t}$$

very precisely the solution to the diffusion equation.
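The square-root growth can be verified numerically. For the Gaussian channel, the rate distortion entropy S_R = R(D) − D dR/dD has derivative dS_R/dD = −1/(2D); if the Onsager equation is taken in the form dD/dt = −μ dS_R/dD = μ/(2D), the solution through the origin is D(t) = √(μt). The sketch below integrates that equation with a fourth-order Runge–Kutta step and compares against the closed form. The function name, the value of μ, and the time window are illustrative assumptions, and integration starts at t0 > 0 to avoid the singularity at D = 0.

```python
import math


def integrate_distortion(mu: float, t0: float, t1: float, n_steps: int) -> float:
    """RK4 integration of dD/dt = mu / (2 D) from t0 to t1,
    starting on the closed-form curve D(t0) = sqrt(mu * t0)."""

    def rhs(d: float) -> float:
        return mu / (2.0 * d)

    h = (t1 - t0) / n_steps
    d = math.sqrt(mu * t0)
    for _ in range(n_steps):
        k1 = rhs(d)
        k2 = rhs(d + 0.5 * h * k1)
        k3 = rhs(d + 0.5 * h * k2)
        k4 = rhs(d + h * k3)
        d += h * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0
    return d


mu = 0.5  # illustrative diffusion coefficient (assumption)
d_numeric = integrate_distortion(mu, t0=1.0, t1=9.0, n_steps=8000)
d_closed = math.sqrt(mu * 9.0)
```

Agreement between `d_numeric` and `d_closed` reflects the fact that D² grows linearly in time, as in ordinary diffusion.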

Some thought will suggest this correspondence reduction is of singular importance, and it is now possible to argue upward from it in both scale and complexity.

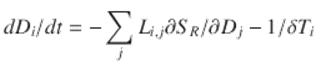

Suppose the relation between system challenge and system response (the manner in which physiological activities of the cognitive systems of interest more-or-less accurately reflect what is called for by environmental conditions) is characterized by another Gaussian channel. Again defining a rate distortion entropy as $S_R = R(D) - D\,dR/dD$ permits definition of a more complicated nonequilibrium Onsager equation in the presence of an incoming system perturbation $\delta T$ as

(1.26)

having the equilibrium solution, where dD∕dt = 0,

(1.27)

The perturbation might be a sensory or regulatory signal, an incoming pathogen, a growing tumor, and so on.

For this simplistic model, the distortion between physiological need and actual physiological response is proportional to the perturbation. In reality, the final distortion measure D is the consequence of a vast array of internal processes that all contribute to it and that, individually, must all be optimized for the organism to survive. That is, overall distortion between total need and total response cannot be constrained by allowing critical subsystems to overload beyond survivable limits: Too high a blood pressure spike in response to a stress spike can be fatal.
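The proportionality between equilibrium distortion and perturbation can be illustrated with a short worked calculation. This is a sketch under a stated assumption, not necessarily the exact form of Eqs. (1.26) and (1.27): the perturbation is taken to enter the Gaussian-channel Onsager equation as a term $-1/\delta T$, mirroring the $1/\delta T_i$ term of Eq. (1.32), and any additional proportionality constant is set to one.

```latex
% Assumed form: Gaussian channel, dS_R/dD = -1/(2D), forcing term -1/\delta T
\begin{align*}
\frac{dD}{dt} &= -\mu\,\frac{dS_R}{dD} - \frac{1}{\delta T}
              = \frac{\mu}{2D} - \frac{1}{\delta T}, \\
\frac{dD}{dt} = 0 \;&\Longrightarrow\; D_{\mathrm{equilib}} = \frac{\mu\,\delta T}{2}.
\end{align*}
```

Under this assumption the equilibrium is stable, since the right-hand side decreases in D, and doubling the perturbation doubles the equilibrium distortion.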

A Less Simple Model

Consider individual responses of the interacting cognitive physiological systems, for the moment, to be a set of m independent but not identically distributed normal random variates having zero mean and variance $\sigma_i^2$, $i = 1, \ldots, m$. Following the argument of Sect. 10.3.3 of Cover and Thomas (2006), assume a fixed channel capacity R available with which to represent this random vector. How should we allot signal to the various components to minimize the total distortion D? A brief argument shows it necessary to optimize

![$$\displaystyle{ R(D) =\min _{\sum D_{i}=D}\sum _{i=1}^{m}\max \{1/2\log [\sigma _{ i}^{2}/D_{ i}],0\} }$$](computational-psychiatry.files/image059.png)

(1.28)

subject to the inequality constraint $\sum_i D_i \leq D$.

Using the Kuhn–Tucker generalization of the Lagrange multiplier method necessary under inequality conditions (e.g., Nocedal and Wright 1999) gives

![$$\displaystyle{ R(D) =\sum _{ i=1}^{m}1/2\log [\sigma _{ i}^{2}/D_{ i}], }$$](computational-psychiatry.files/image060.png)

(1.29)

where $D_i = \lambda$ if $\lambda < \sigma_i^2$ or $D_i = \sigma_i^2$ if $\lambda \geq \sigma_i^2$, and $\lambda$ is chosen so that $\sum_i D_i = D$.

Thus, even under conditions of “independence,” there is a complex “reverse water-filling” relation for Gaussian variables.
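The reverse water-filling rule can be sketched in a few lines. The code below finds the water level λ by bisection, so that the per-component distortions min(λ, σ_i²) sum to the total budget D, and then evaluates the rate of Eq. (1.29) in nats. The function name, the bisection approach, and the example variances are illustrative assumptions, not taken from the source.

```python
import math


def reverse_water_filling(variances, total_distortion):
    """Return (lam, per-component distortions, rate in nats) for
    independent zero-mean Gaussian components with the given variances."""
    if total_distortion <= 0.0:
        raise ValueError("total_distortion must be positive")
    if total_distortion >= sum(variances):
        # Budget covers every variance: no component needs any rate.
        return max(variances), list(variances), 0.0

    # sum_i min(lam, var_i) is nondecreasing in lam, so bisection applies.
    lo, hi = 0.0, max(variances)
    for _ in range(200):
        lam = 0.5 * (lo + hi)
        if sum(min(lam, v) for v in variances) < total_distortion:
            lo = lam
        else:
            hi = lam
    lam = 0.5 * (lo + hi)

    distortions = [min(lam, v) for v in variances]
    # Components with variance at or below the water level get zero rate.
    rate = sum(
        0.5 * math.log(v / d) for v, d in zip(variances, distortions) if d < v
    )
    return lam, distortions, rate


# Illustrative example (assumed values): three components, budget D = 1.5.
lam, distortions, rate = reverse_water_filling([4.0, 1.0, 0.25], 1.5)
```

In this example the lowest-variance component sits below the water level and is not described at all, while the other two share the same distortion λ; changing the budget D therefore changes which components receive any signal, even though the components are statistically independent.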

In the real world, the different subcomponents will engage in complicated crosstalk.

Assume m different subsystems that are not independent. Define a Rate Distortion function $R(D_1, \ldots, D_m) = R(\mathbf{D})$ and an associated Legendre transform “rate distortion entropy” $S_R$ having the Onsager-like form

$$S_R \equiv R(\mathbf{D}) - \sum_{i=1}^{m} D_i\, \partial R/\partial D_i$$

(1.30)

The most direct generalization of Eq. (1.26) is

(1.31)

At equilibrium, all dDi ∕dt ≡ 0, so that it becomes necessary to minimize each Di under the joint constraints

![$$\displaystyle\begin{array}{rcl} \left [\sum _{j}L_{i,j}\partial S_{R}/\partial D_{j}\right ] + 1/\delta T_{i} = 0,& & \\ D_{i} \leq D_{i}^{\mathrm{max}}\,\forall i& &{}\end{array}$$](computational-psychiatry.files/image063.png)

(1.32)

remembering that R(D) must be a convex function.

The Di max represent limits on both internal and external distortion measures as needed for survival.

This is a complicated problem in Kuhn–Tucker optimization for which the exact form of the crosstalk-dominated R(D) is quite unknown, in the context that even the independent Gaussian channel example involves constraints of mutual influence via reverse water-filling. In sum, changing a single perturbation $\delta T_i$ will inevitably reverberate across the entire system, necessarily affecting, sometimes markedly, each distortion measure that characterizes the difference between needed and observed physiological subsystem response to challenge.

Most importantly, there may, in fact, be no general solution having $D_i \leq D_i^{\mathrm{max}}\ \forall i$, that is, no possible Pareto surface defining the limits of optimality. Such failure of solution is precisely the punctuated accession to detection of that perturbation. The setting of the variates $D_i^{\mathrm{max}}$ represents the tuning of the system of interacting cognitive modules. More subtle tuning, in the presence of noise, leads to the final model.

The Index Theorem Attack

The model of Eq. (1.31) admits unstable equilibria. Their elimination, and the imposition of more general tuning criteria, can be met by an appropriate system of stochastic Onsager differential equations, having the form

![$$\displaystyle\begin{array}{rcl} dD_{t}^{i} = [L_{ i}(t,\mathbf{D}) - 1/f_{i}(\mathbf{T})]dt +\sigma _{i}(t,\mathbf{D})dB_{t}^{i},& & \\ D_{i} \leq D_{i}^{\mathrm{max}}\,\forall i& &{}\end{array}$$](computational-psychiatry.files/image065.png)

(1.33)

where, again, the $dB_t^i$ represent noise terms having characteristic quadratic variations (Protter 1990) and the $f_i(\mathbf{T})$ are monotonic increasing functions of the perturbation vector $\mathbf{T} = (\delta T_1, \ldots, \delta T_n)$. As above, noise precludes unstable equilibria and is thus quite as important as crosstalk; the result can be interpreted as the exaptation of both noise and crosstalk into physiological global broadcasts.

Thus both the vectors D ≡ (D 1, …, Dn ) and T provide tuning criteria under the stochastic Kuhn–Tucker optimization conditions that would generalize Eq. (1.32).

That is, D establishes thresholds for perturbation detection, and the fi (T) tune the sensitivity of the system across the perturbation vector T, determining what will be “looked for” under nominal circumstances. Amplified perturbations that resonate across the system, and cause some Di to exceed its Di max, enter the “theater of generalized consciousness” for the particular set of linked, crosstalking cognitive modules being brought into collaboration.
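The threshold behavior can be illustrated with a single-module simulation. This is a minimal sketch under loudly stated assumptions: the drift term of Eq. (1.33) is taken to be the Gaussian-channel form μ/(2D), the sensitivity function is the identity f(T) = δT, the noise amplitude is constant, and all parameter values, including the threshold, are hypothetical. The scheme is a plain Euler–Maruyama step with a small numerical floor to keep D positive.

```python
import math
import random


def simulate_module(delta_t, d_max, mu=1.0, sigma=0.02, d0=0.5,
                    dt=0.01, n_steps=5000, seed=0):
    """Euler-Maruyama for dD = [mu/(2D) - 1/delta_t] dt + sigma dB.

    Returns (path, first_crossing), where first_crossing is the first
    step index at which D exceeds d_max, or None if it never does.
    """
    rng = random.Random(seed)
    d = d0
    path = [d]
    first_crossing = None
    for step in range(1, n_steps + 1):
        drift = mu / (2.0 * d) - 1.0 / delta_t  # assumed Gaussian-channel drift
        d += drift * dt + sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)
        d = max(d, 1e-6)  # numerical floor, not part of the model
        path.append(d)
        if first_crossing is None and d > d_max:
            first_crossing = step
    return path, first_crossing


# Hypothetical threshold D_max = 1.0; noise-free equilibrium is mu * delta_t / 2.
baseline_path, baseline_hit = simulate_module(delta_t=1.0, d_max=1.0)
perturbed_path, perturbed_hit = simulate_module(delta_t=4.0, d_max=1.0)
```

With the weak perturbation the distortion fluctuates around 0.5 and stays below the threshold; with the stronger one it relaxes toward 2.0 and crosses the threshold early in the run, which in the language above corresponds to the perturbation entering detection. A multi-module version with crosstalk would require a specific coupling form that the source does not supply.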

Note that Eq. (1.33) can be more simply expressed as

$$dD_t^i = \mathcal{L}_i(t, \mathbf{D}, \mathbf{T})\,dt + \sigma_i(t, \mathbf{D})\,dB_t^i$$

(1.34)

Then the stochastic Kuhn–Tucker optimization is across the system of equations

![$$\displaystyle\begin{array}{rcl} [\mathcal{L}_{i}(t,\mathbf{D},\mathbf{T})dt +\sigma _{i}(t,\mathbf{D})dB_{t}^{i}] = 0,& & \\ D_{i} \leq D_{i}^{\mathrm{max}}\,\forall i& &{}\end{array}$$](computational-psychiatry.files/image067.png)

(1.35)

at a fixed perturbation setting T.

By the network linkages inherent in the functions $\mathcal{L}_i(t, \mathbf{D}, \mathbf{T})$, a perturbation $\delta T_k$ can influence more than just the distortion measure $D_k$. That is, a perturbation $\delta T_k$ that does not trigger a particular $D_k > D_k^{\mathrm{max}}$ may still resonate across the system’s crosstalk connections, violating an apparently distant $D_j$ constraint, $j \neq k$.

Equation (1.35) represents, then, a generalized index theorem, in the sense discussed above, in that—underlying the analytic conditions—there are particular topologies of interconnected dual information sources linked by crosstalk, as described earlier. The details, however, can become mathematically complicated (e.g., Glazebrook and Wallace 2009a,b).

This argument provides another approach, via necessary conditions imposed by the asymptotic limits of information theory, to empirical models for a broad spectrum of global broadcast phenomena that recruit individual cognitive modules into shifting cooperative arrays that have both tunable detection thresholds for perturbation and tunable sensitivities to perturbation. These dynamic rate distortion models are, again, analogous to empirical regression models based on the Central Limit Theorem. When optimization by an “unconscious” cognitive system fails—there is no Pareto surface—the signal is propelled, in a punctuated manner, into detection by the “spotlight” that represents accession to attention from a global broadcast of linked subsystems needed to address a problem too complex for any single submodule.

1.12 Discussion and Conclusions

Sections 1.9–1.11 explore related statistical models of cognitive global broadcasts, slightly different views of the same elephant, as it were. While useful for data analysis—analogous to the varieties of regression models—they also provide examples of Dretske’s imperative: the necessary conditions imposed by the asymptotic limit theorems of communication theory constrain cognitive process at all scales, levels of organization, and modes of distribution.

Information is a form of free energy instantiated by physical processes that themselves consume free energy, permitting adaptation of empirical approaches from nonequilibrium thermodynamics and statistical mechanics to cognitive phenomena. There are, however, restrictions imposed by the local irreversibility of information sources. Embedding high-level neural global broadcasts within a nested hierarchy of cognitive and other sources of impinging information evades the logical fallacy of attributing to “the brain” the broad spectrum of functions that can only be embodied by the full individual-in-context.